Abstract:

Deep learning has resulted in breakthroughs in dealing with big data and in speech recognition, computer vision, natural language processing, and many other domains. It is based on deep neural networks with structures designed for various purposes. A mathematical foundation is needed to help understand the modelling, and the approximation and generalization capabilities, of deep learning models with different network architectures and structures. In this talk, Professor Zhou will consider deep convolutional neural networks (CNNs), which are induced by convolutions. The convolutional architecture gives rise to essential differences between deep CNNs and classic neural networks. Professor Zhou will describe a mathematical theory for deep CNNs associated with the rectified linear unit (ReLU) activation. In particular, he will discuss the approximation and learning capabilities of deep CNNs for functions of many variables.

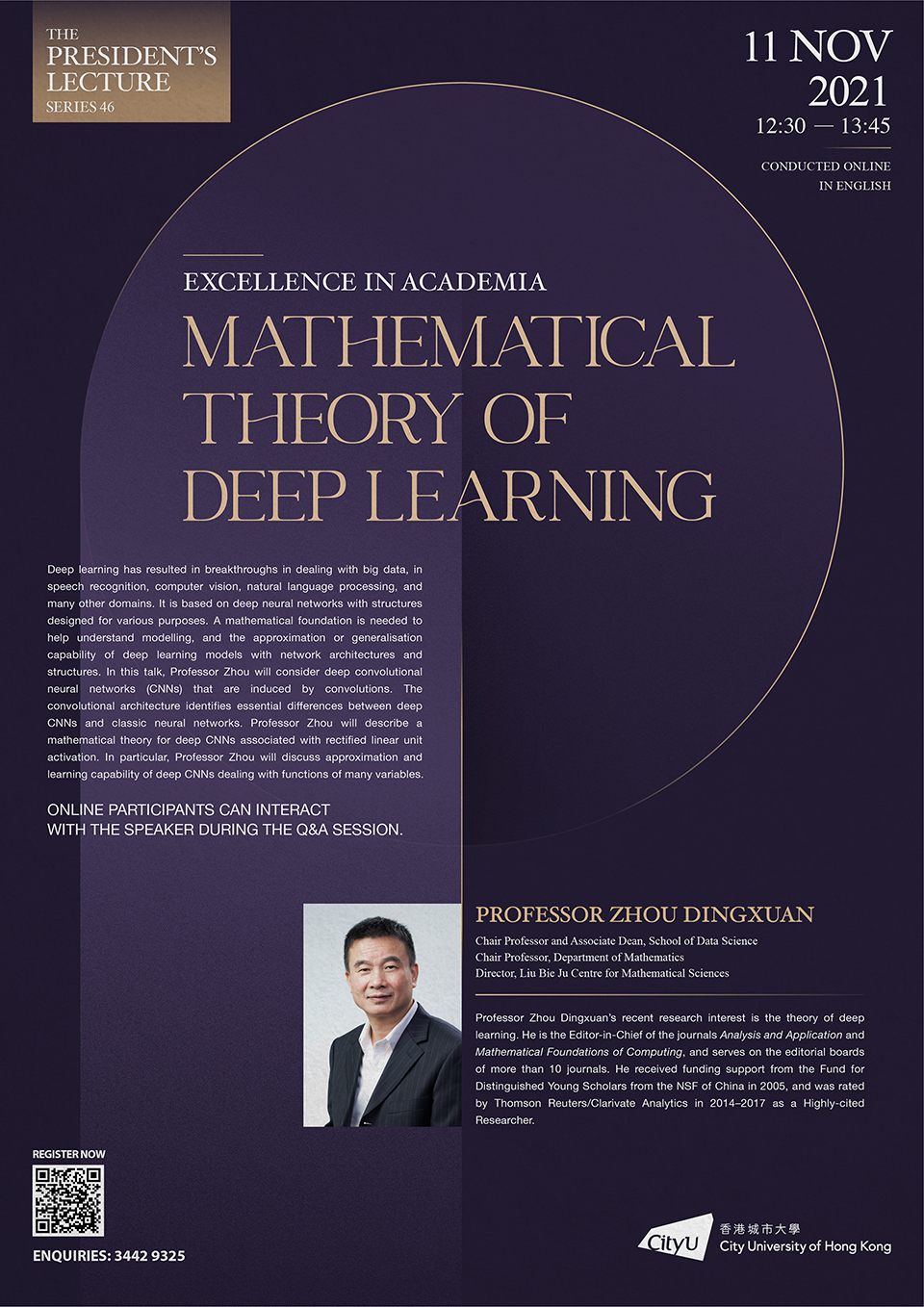

Speaker: Professor ZHOU Dingxuan

Chair Professor and Associate Dean of the School of Data Science, and Chair Professor of the Department of Mathematics at CityU

Date: 11 November 2021 (Thursday)

Time: 12:30 PM – 1:45 PM

Venue: The lecture will be conducted online. Attendees can participate fully and interact with the speaker during the Q&A session.

Details: Event Poster